Recently, GEO Jobe explored applying image-based neural networks to GIS technology and maps. Convolutional Neural Networks, or CNNs, are a class of machine learning models that are trained on labeled imagery to detect and classify objects in other imagery datasets.

“Computer vision (CV) enables computers and systems to derive meaningful information from digital images, videos, and other visual input – and take actions or make recommendations based on that information.” (Quoted from IBM) IBM wrote it perfectly, but put simply, CV converts images into computer-readable data, which CNNs turn into insights that drive decisions and improve understanding.

Toolbox

Our project was a simple method for identifying vegetation and utility infrastructure in high-elevation imagery, with the goal of flagging potentially problematic areas. The two sources of imagery were drone imagery captured in 2007 in Jackson, Mississippi, and 2020 high-resolution satellite imagery provided by MARIS. Development was done primarily in ArcGIS Pro. Pro Notebooks are an invaluable tool for writing scripts, especially when creating a neural network from scratch. Setting up the environment can definitely be tricky, however, so I will briefly list the main resources and requirements.

- Deep Learning Libraries

- Make sure these are installed correctly for your current version of ArcGIS Pro.

- Required Extensions: Image Analyst, Spatial Analyst

- A Cloned Python environment with desired TensorFlow and Keras versions

- (Optional) CUDA-enabled GPU (NVIDIA)
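
Before training anything, it can help to confirm from a notebook cell that the cloned environment actually contains the packages listed above. The following is a minimal sketch, not an official Esri check: the package names `arcpy`, `tensorflow`, and `keras` are assumed from the list, and the helper names `check_packages` and `tensorflow_gpus` are made up for illustration.

```python
import importlib.util


def check_packages(names):
    """Return {package_name: True/False} for whether each package can be located."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


def tensorflow_gpus():
    """List GPUs visible to TensorFlow, or None if TensorFlow is not installed.

    Uses tf.config.list_physical_devices, which assumes TensorFlow 2.1 or later.
    """
    if importlib.util.find_spec("tensorflow") is None:
        return None
    import tensorflow as tf  # imported lazily so the check works without it
    return tf.config.list_physical_devices("GPU")


# Assumed package names; adjust them to match your own cloned environment.
for name, found in check_packages(["arcpy", "tensorflow", "keras"]).items():
    print(f"{name}: {'found' if found else 'MISSING'}")
```

If TensorFlow is found, calling `tensorflow_gpus()` and getting back an empty list suggests the CUDA setup is the part that needs attention.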

Data Prep

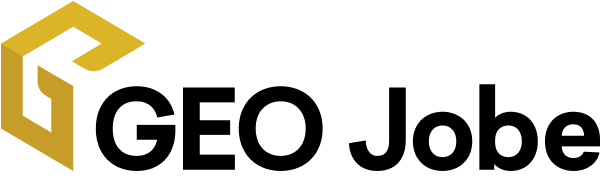

The map data initially arrived as separate image sets. For each test area, the images were combined into one raster layer by creating a new raster layer and adding the images to it, and the number of bands was matched across sources. For example, the 2020 Marion County imagery was converted from 5-band to 3-band imagery to match the training model. Each area was then trimmed to a subsection for labeling and training to shorten runtime. Supervised learning requires a labeled dataset. Esri provides the Label Objects for Deep Learning tool under Image Classification in the Imagery tab on the ribbon, which was used to manually annotate a few different object classes and to try different annotation methods, such as rectangles versus polygons. Hundreds of manually labeled objects were then exported as TIFF files to be used as training data.
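
The band-matching step can be pictured with a small sketch in plain Python. This is only an illustration of the idea, not the ArcGIS Pro workflow used in the project: the raster is modeled as a list of bands, the function name `select_bands` is hypothetical, and keeping the first three bands is an assumption, since the bands that were actually kept are not stated here.

```python
def select_bands(raster, band_indices):
    """Return a new raster holding only the requested bands, in the given order.

    `raster` is a list of bands; each band is a list of rows of pixel values.
    """
    band_count = len(raster)
    for index in band_indices:
        if not 0 <= index < band_count:
            raise IndexError(f"band {index} not in raster with {band_count} bands")
    return [raster[index] for index in band_indices]


# Toy 5-band raster, 1 row by 2 columns per band (values are placeholders).
five_band = [[[band, band]] for band in range(5)]

# Assumption: keep the first three bands so the imagery matches a 3-band model.
three_band = select_bands(five_band, [0, 1, 2])
print(len(five_band), "->", len(three_band), "bands")
```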

Notebook Method

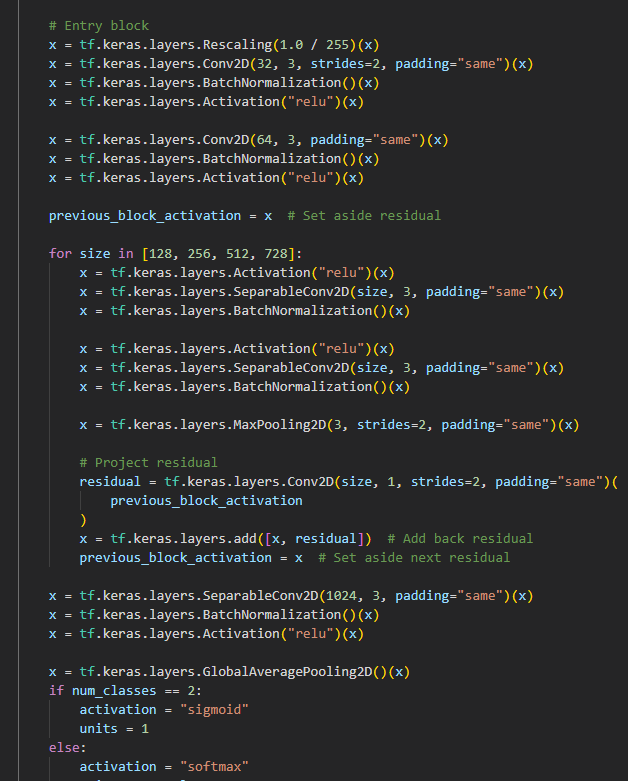

The first approach was to create the model in ArcGIS Notebooks. The dataset was split into training and testing sets, and the first model was created. Working iteratively through small variations in layers and data preparation, the model was trained to an accuracy in the high 60s (percent), with good loss metrics. The model then evolved into a semi-automated hyperparameter search, in which a method iterated over many combinations of layers and parameter settings and was run overnight to determine the best parameters. TensorFlow (TF) has built-in methods for computing model metrics, evaluations, loss values, and more, which were used in the decision. We attempted to use KerasTuner for hyperparameter tuning, but ran into issues getting its version to cooperate with the TensorFlow version allowed by ArcGIS Pro, so it was abandoned.
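
The overnight search over layer and parameter combinations can be sketched as an exhaustive grid search. This is a simplified stand-in rather than the notebook code itself: the search space in `PARAM_GRID` is hypothetical, and `toy_evaluate` is a made-up scoring formula that takes the place of building, training, and validating a Keras model so the sketch runs without TensorFlow.

```python
import itertools


def grid_search(param_grid, evaluate):
    """Try every combination in param_grid and return (best_params, best_score)."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for values in itertools.product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Hypothetical search space; the values actually tried are not listed in this post.
PARAM_GRID = {
    "conv_layers": [2, 3, 4],
    "filters": [16, 32, 64],
    "dropout": [0.0, 0.25, 0.5],
}


def toy_evaluate(params):
    """Placeholder score; a real version would return validation accuracy."""
    return (params["conv_layers"] * 0.1
            + params["filters"] / 640
            - abs(params["dropout"] - 0.25))


best_params, best_score = grid_search(PARAM_GRID, toy_evaluate)
print(best_params, round(best_score, 3))
```

With 3 x 3 x 3 values this evaluates 27 combinations. Each real evaluation is a full training run, which is why a search like this tends to become an overnight job.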

ArcGIS Pro Built-in Methods

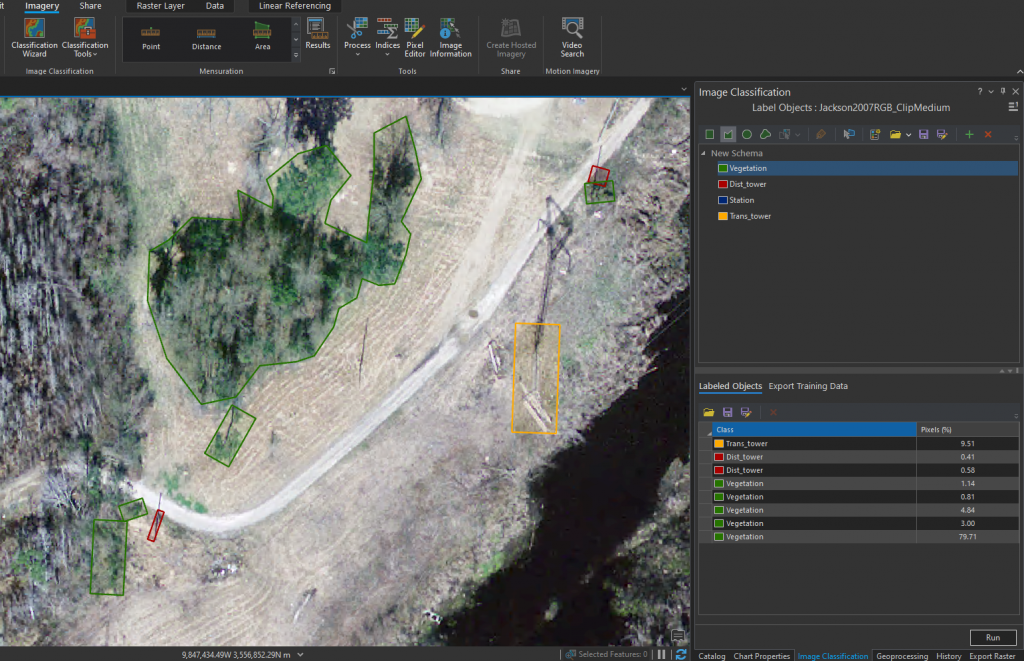

The alternative to notebooks was to use the Train Deep Learning Model tool directly. The previously exported training data was fed in, Batch Size was set to 32 (set it lower on a less powerful machine), and the learning rate was left blank so the tool could determine it. In the Environments tab, it is important to set the Processor Type to GPU to vastly accelerate training. Various model types and backbone models were tried and the results analyzed. Similar to specifying a backbone model, transfer learning can also be used here by providing a pre-trained model to the Train Deep Learning Model tool. Starting from a model trained on similar data can vastly improve both quality and speed. Once the model was trained, Detect Objects Using Deep Learning was run, again with the Processor Type set to GPU and the batch size set to a machine-appropriate value. Non-Maximum Suppression was enabled with an overlap ratio of 0.25, so that some overlap is still allowed between detections, such as trees near or on utilities.
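
To make the overlap setting concrete, here is a minimal, class-agnostic sketch of non-maximum suppression in plain Python. It is not Esri's implementation. It assumes boxes given as `(xmin, ymin, xmax, ymax)` and treats the overlap ratio as intersection area over union area; the function names are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (xmin, ymin, xmax, ymax)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, max_overlap=0.25):
    """Keep high-scoring boxes, dropping any that overlap a kept box too much.

    `detections` is a list of (box, score) tuples. Boxes are visited from the
    highest score to the lowest, and a box is kept only if its IoU with every
    already-kept box is at most `max_overlap`.
    """
    kept = []
    for box, score in sorted(detections, key=lambda d: d[1], reverse=True):
        if all(iou(box, kept_box) <= max_overlap for kept_box, _ in kept):
            kept.append((box, score))
    return kept


# Made-up detections: two near-duplicates and one box that barely touches them.
detections = [
    ((0, 0, 10, 10), 0.9),
    ((1, 1, 11, 11), 0.8),   # IoU with the first box is 81/119, so it is dropped
    ((8, 8, 18, 18), 0.7),   # IoU with the first box is 4/196, so it is kept
]
kept = non_max_suppression(detections, max_overlap=0.25)
print(kept)
```

A threshold of 0 would discard any detection that touches a higher-scoring one, while 0.25 keeps lightly overlapping detections and still removes near-duplicates.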

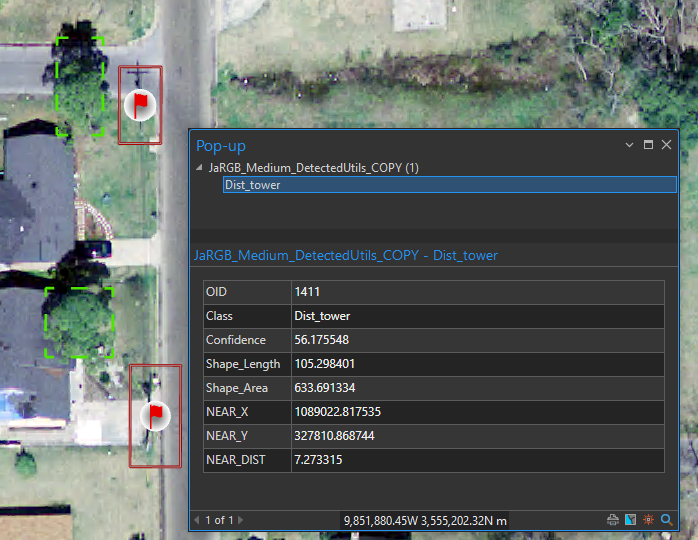

Finally, potentially problematic utility locations were identified with the Near tool, and symbology was applied that scales in size so that areas where vegetation and utilities are closest together stand out.
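
The idea behind this step can be sketched in a few lines of plain Python: find the distance from each utility to its nearest tree, then map shorter distances to larger symbols. This is an illustration only, not the Near tool or the symbology settings used in the project. It assumes point features in projected coordinates, and the function names, the 30-unit cutoff, and the symbol sizes are all hypothetical.

```python
import math


def nearest_distance(point, candidates):
    """Return the straight-line distance from `point` to the closest candidate."""
    return min(math.hypot(point[0] - c[0], point[1] - c[1]) for c in candidates)


def symbol_size(distance, max_distance=30.0, min_size=4.0, max_size=20.0):
    """Map a distance to a marker size so that closer features get larger symbols."""
    closeness = 1.0 - min(distance, max_distance) / max_distance
    return min_size + closeness * (max_size - min_size)


# Made-up coordinates for detected utilities and trees.
utilities = [(0.0, 0.0), (100.0, 0.0)]
trees = [(3.0, 4.0), (50.0, 50.0)]

for utility in utilities:
    distance = nearest_distance(utility, trees)
    print(utility, round(distance, 1), round(symbol_size(distance), 1))
```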

Findings, Challenges, and Conclusions

Overall, using notebooks and writing the neural network manually provides far more control over the model, its parameters, and data preprocessing. However, it can be more challenging to integrate into a workflow and requires more experience. ArcGIS Pro’s tools provide simplicity and quick visualizations, making deep learning and image classification accessible to any user.

RetinaNet, YOLOv3, and FasterRCNN proved to be the best model choices for this dataset. In the end, vegetation proved difficult to assess consistently in formats other than bounding boxes, and bounding boxes often captured bulk areas poorly. DeepForest is a library that helps with this problem and more accurately bounds vegetation as an individual feature layer.

Finally, higher quality and finer granularity of images play an important role in model accuracy. Satellite images are easy to get, but modern drone collection would likely provide the best results: the 2007 drone imagery, captured without high resolution in mind, produced accuracy fairly comparable to the 2020 high-resolution satellite imagery, falling only just short.

More Resources

A Guide to Convolution Arithmetic for Deep Learning